This article is aimed at engineers, consultants or project managers, and sets out the issues that can occur when migrating legacy Exchange archives to G Suite and Google Vault (GV). We recently completed a migration project for a large communications company, which entailed moving their Exchange mailbox data stored in legacy enterprise vault archives to GV. The product used to perform the work was TransVault Migrator.

These are the top five issues we encountered and the solutions we adopted to make the project a success.

One: Retaining user mailbox integrity for future delegate access

There are some issues with migrating your data directly to GV. One of them is that if you later want to provide delegate access to a user’s GV data for discovery purposes, you effectively have to grant access to either the entire domain or specific organisational units (Understanding Vault Privileges), which means the delegate may gain access to data they are not entitled to see.

After a little digging, the team found a solution: migrate the data to a G Suite mailbox and, once the migration is complete, convert the G Suite mailbox to a GV. Although this adds admin overhead, the benefit is that the GV can later be converted back to a G Suite mailbox and delegate access provided.

Two: Understanding Licence limitations with the G Suite API

When moving archive content through the G Suite API, the migration product requires a full G Suite licence to be applied, even if you migrate directly to GV. I raise this because our client had performed some preliminary investigation into the licence requirement and believed that a VFE (Vault Former Employee) licence was sufficient. Unfortunately, this isn’t the case. It took our client by surprise: they had enough VFE licences to complete the migration, and were now faced with having to purchase full G Suite licences to migrate the data.

There was no simple solution to this issue. The client ended up purchasing 400 G Suite licences and running the migrations in batches. By our estimate, this made the project’s processing time 54% longer. Understanding what you need from a licensing perspective is therefore critical: it allows you to prepare either for a longer project or for additional licensing costs to increase the efficiency of the migration.
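To make the batching idea concrete, here is a minimal Python sketch of splitting a mailbox list into groups no larger than the licence pool, so that licences can be released and reassigned between batches. The function name, the mailbox addresses and the figure of 1,000 archives are hypothetical and purely for illustration; only the 400-licence figure comes from the project described above.

```python
from typing import Iterator, List


def licence_batches(mailboxes: List[str], licences: int) -> Iterator[List[str]]:
    """Yield successive groups of mailboxes, each no larger than the licence pool."""
    if licences <= 0:
        raise ValueError("licences must be a positive integer")
    for start in range(0, len(mailboxes), licences):
        yield mailboxes[start:start + licences]


# Hypothetical workload: 1,000 archives to migrate with 400 licences available.
mailboxes = [f"user{i}@example.com" for i in range(1000)]
batches = list(licence_batches(mailboxes, licences=400))
batch_sizes = [len(batch) for batch in batches]
print(batch_sizes)  # [400, 400, 200]
```

Each batch has to finish before its licences can be moved to the next group, which is why a small licence pool stretches the overall timeline.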

Three: Prolonged migration timelines due to the restriction imposed on the G Suite API

As part of any migration project, you want to migrate the data in the most efficient way, and sometimes this means knowing the limitations or restrictions of the platform you are migrating to. Within Exchange, you can manually set throttling policies and increase the limits to achieve better performance; the same is possible with Exchange Online by opening a Microsoft Support ticket. G Suite, however, imposes a strict limit: the G Suite API effectively allows 1 message per second to each account being migrated (Email Receiving Limits). Any more than this and the account could be locked out.

We handled this by throttling the number of messages the migration product could migrate. This proved effective and allowed a slower but still efficient migration.

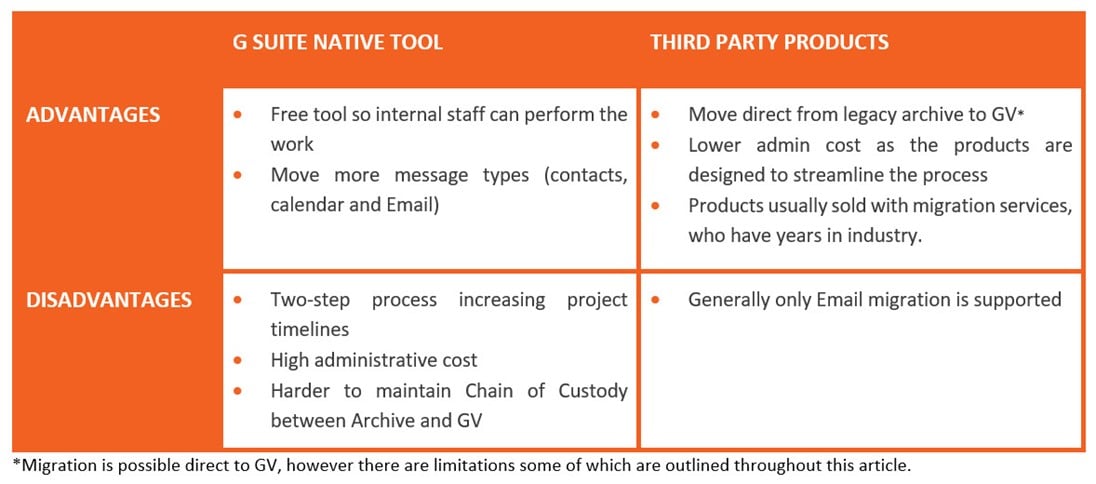
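In our project the throttling was a setting in the migration product, but the underlying idea is simple enough to sketch. The Python below is an illustration only, not how TransVault Migrator implements it: the class and its parameters are hypothetical, and the one-second interval reflects the limit described above.

```python
import time
from typing import Callable, Dict


class PerAccountThrottle:
    """Space out sends so each account receives at most one message per interval."""

    def __init__(self, min_interval: float = 1.0,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last_sent: Dict[str, float] = {}

    def wait(self, account: str) -> float:
        """Block until `account` may receive another message; return seconds waited."""
        now = self._clock()
        last = self._last_sent.get(account)
        delay = 0.0
        if last is not None:
            delay = max(0.0, self.min_interval - (now - last))
        if delay > 0:
            self._sleep(delay)
        # Record the moment this message is allowed to go out.
        self._last_sent[account] = now + delay
        return delay
```

A migration loop would call `throttle.wait(account)` immediately before sending each message. Because the limit applies per account, messages for different accounts can still be interleaved without waiting on each other.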

Four: Third-Party Migration products vs G Suite Native tools when migrating from Legacy archives

There are a few products out there that can migrate content to GV, including a native tool provided by Google. Here I want to highlight some advantages and disadvantages, as it helped us to understand what each technology offers and enables you to highlight the benefits of direct migration.

Most, if not all, third-party products cannot migrate all archived content; in fact, some can only migrate email. Although this is not an advantage over the G Suite native tool, third-party products are better streamlined for migrating legacy archives. This is because the G Suite native tool has to plug in directly to a supported email platform or use PST files as a source, which means migration from a legacy archive is a two-step process: the archive content must first be moved to one of those sources and only then imported.

Five: Messages fail to migrate to G Suite/GV due to non-SMTP addresses

Legacy archives can contain messages where recipients have been recorded either as a display name or as a LegacyExchangeDN, both of which are valid identifiers within Exchange. However, a solution like G Suite/GV cannot translate those types of identifiers and requires a common identifier, such as an SMTP address, to be in place as a recipient for the message to pass through the G Suite API.

Depending on the product used, there are different methods of resolving this, and in some products you can’t resolve it at all. With TransVault Migrator there are two methods of resolving non-SMTP addresses. The first is to import LDAP and enable conversion of addresses. When recipients are parsed, any that are identified as non-SMTP trigger a lookup that replaces identifiers such as display names and LegacyExchangeDN values with the user’s current SMTP address. This is great for users that still exist within an environment, but many organisations remove users from Exchange and Active Directory over time.

This leads us to the second method, which handles non-SMTP recipients by recording them in a CSV during a migration pass (the Recipients CSV, found in the installation directory of TransVault Migrator). Emails that fail to migrate can be reprocessed later once the non-SMTP address issue is resolved. To see how to create and import CSVs for address conversion, we recommend Appendix A in the TransVault Migrator Help Guide.
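The two methods can be pictured with a short Python sketch. This is not TransVault Migrator’s implementation or file format. The dictionary stands in for the imported LDAP data, the CSV column names are hypothetical, and the case-insensitive match and the loose address pattern are assumptions made for the example.

```python
import csv
import io
import re
from typing import Dict, List, Optional, TextIO, Tuple

# Deliberately loose check for something shaped like an SMTP address.
SMTP_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def resolve_recipient(recipient: str, directory: Dict[str, str]) -> Optional[str]:
    """Return an SMTP address for `recipient`, or None if it cannot be resolved."""
    candidate = recipient.strip()
    if SMTP_PATTERN.match(candidate):
        return candidate
    # Assumption: directory keys are stored in lower case for matching.
    return directory.get(candidate.lower())


def resolve_all(recipients: List[str],
                directory: Dict[str, str]) -> Tuple[List[str], List[str]]:
    """Split recipients into resolved SMTP addresses and unresolved identifiers."""
    resolved, unresolved = [], []
    for recipient in recipients:
        address = resolve_recipient(recipient, directory)
        if address is None:
            unresolved.append(recipient.strip())
        else:
            resolved.append(address)
    return resolved, unresolved


def write_unresolved_csv(unresolved: List[str], handle: TextIO) -> None:
    """Record unresolved identifiers so an SMTP address can be filled in later."""
    writer = csv.writer(handle)
    writer.writerow(["original_identifier", "smtp_address"])  # hypothetical columns
    for identifier in unresolved:
        writer.writerow([identifier, ""])


# Hypothetical directory data and recipients.
directory = {
    "/o=example/ou=exchange administrative group/cn=recipients/cn=jsmith":
        "john.smith@example.com",
}
recipients = [
    "alice@example.com",
    "/O=Example/OU=Exchange Administrative Group/cn=Recipients/cn=jsmith",
    "Departed User",
]
resolved, unresolved = resolve_all(recipients, directory)
buffer = io.StringIO()
write_unresolved_csv(unresolved, buffer)
print(resolved)    # ['alice@example.com', 'john.smith@example.com']
print(unresolved)  # ['Departed User']
```

The departed user ends up in the CSV rather than silently failing, which mirrors the reprocess-later workflow: fill in the missing address, import the file, and run the failed messages again.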

Recommended reading

The Train to Google Suite – Denis Kattithara